PromptKD: Unsupervised Prompt Distillation for Vision-Language Models

A simple and effective prompt-driven unsupervised distillation framework for vision-language models (VLMs), achieving state-of-the-art performance.

Highlights

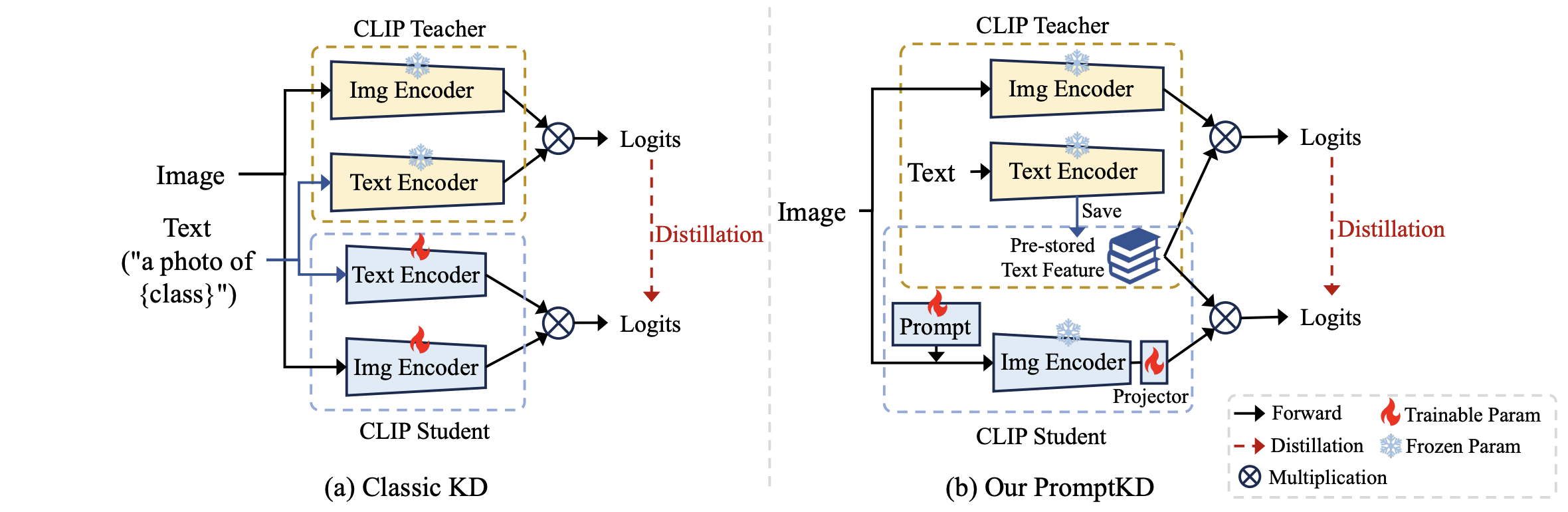

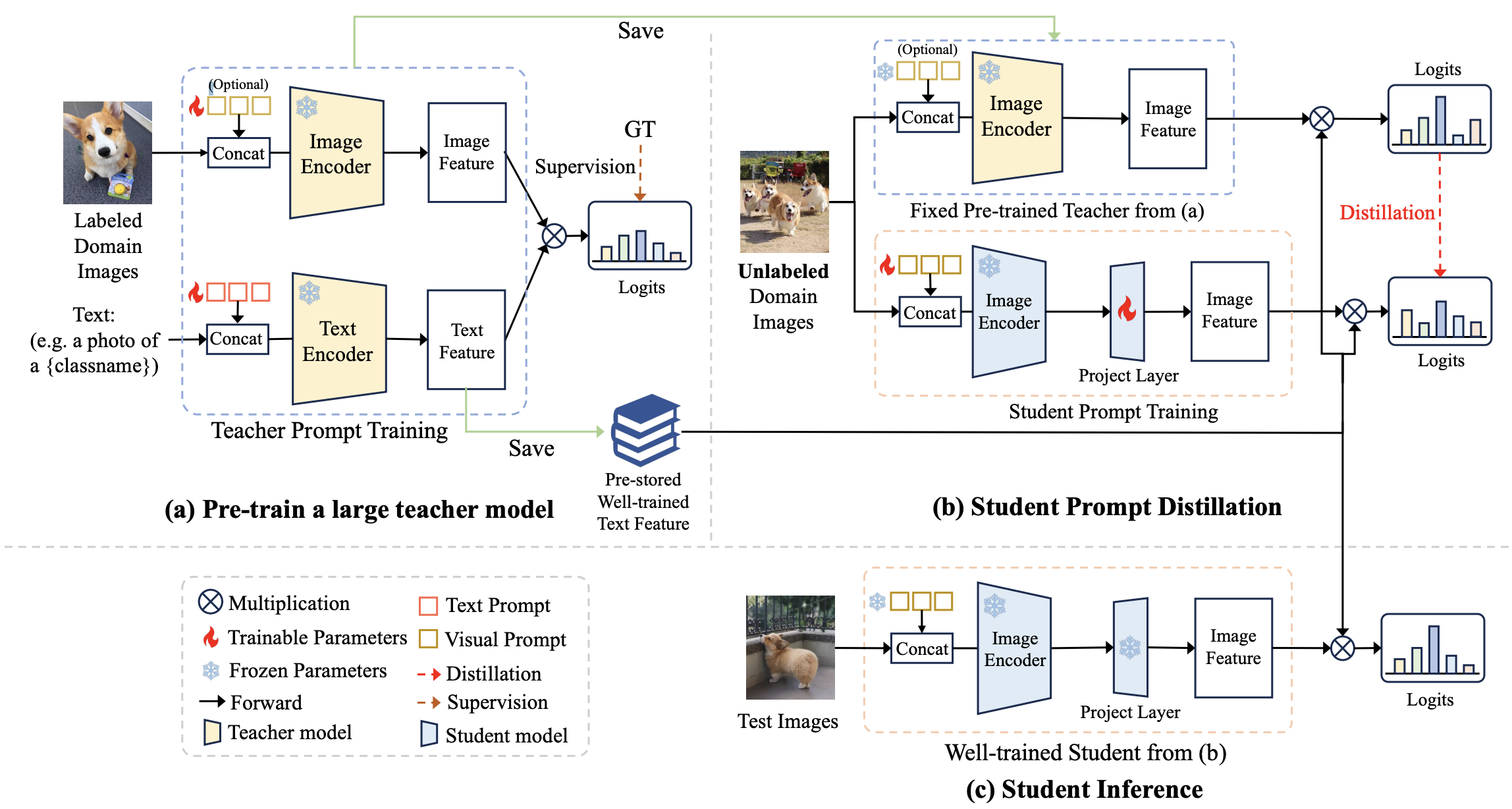

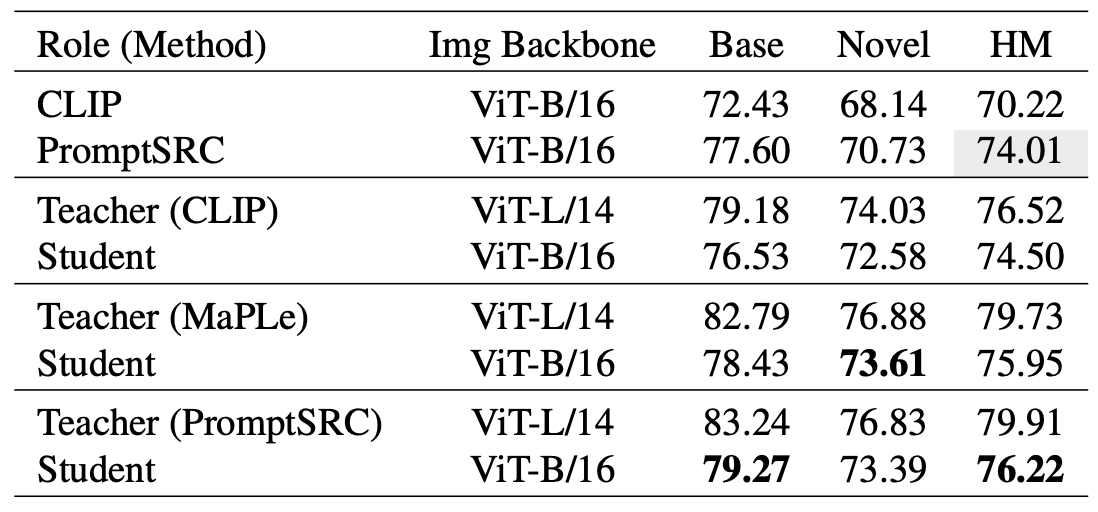

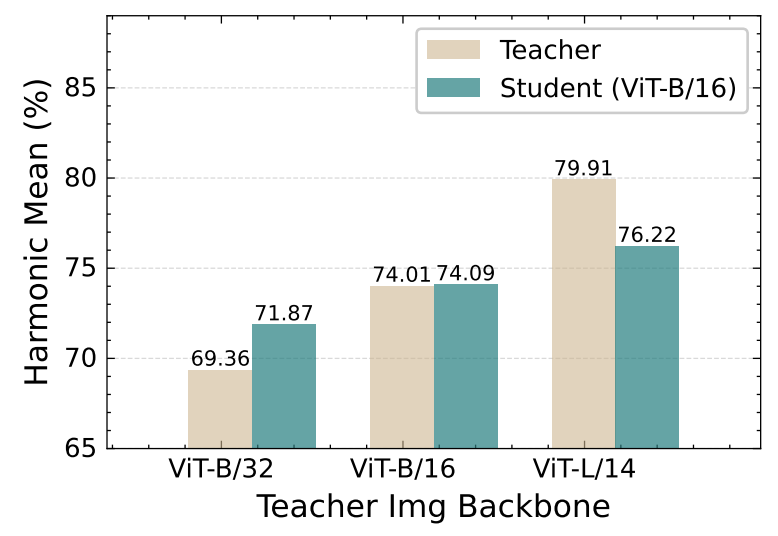

(1). A novel two-stage unsupervised prompt distillation framework for Vision-Language Models.

(2). The student reuses the teacher's high-quality text features instead of training its own text encoder.
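In CLIP-style models, each class score is the similarity between an image feature and that class's text feature. The sketch below illustrates why a student can skip its own text encoder: the teacher's class text features are encoded once and cached, and the student only produces image features that are scored against that cache. It is a minimal, stdlib-only illustration under stated assumptions, not the PromptKD implementation. The class name `CachedTextClassifier`, the plain-list feature vectors, the default `logit_scale` of 100 (in the spirit of CLIP's learned temperature), and the assumption that student image features already share the teacher text features' dimension are all hypothetical.

```python
import math
from typing import List, Sequence


def l2_normalize(v: Sequence[float]) -> List[float]:
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


class CachedTextClassifier:
    """Hypothetical helper: scores student image features against cached teacher text features."""

    def __init__(self, teacher_text_features: Sequence[Sequence[float]], logit_scale: float = 100.0):
        # Assumption: one teacher text feature per class, computed once offline.
        # Normalizing here means no text encoder runs during student training or inference.
        self.text_features = [l2_normalize(t) for t in teacher_text_features]
        self.logit_scale = logit_scale  # assumed CLIP-like temperature

    def logits(self, student_image_feature: Sequence[float]) -> List[float]:
        # Cosine similarity between the student's image feature and each cached class feature,
        # multiplied by the logit scale to give one score per class.
        img = l2_normalize(student_image_feature)
        return [self.logit_scale * sum(a * b for a, b in zip(img, t)) for t in self.text_features]


if __name__ == "__main__":
    classifier = CachedTextClassifier([[1.0, 0.0], [0.0, 1.0]])
    print(classifier.logits([3.0, 4.0]))
```

The design choice this illustrates is that the per-class text side is a fixed lookup table, so the only trainable part on the student side is whatever produces the image feature.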

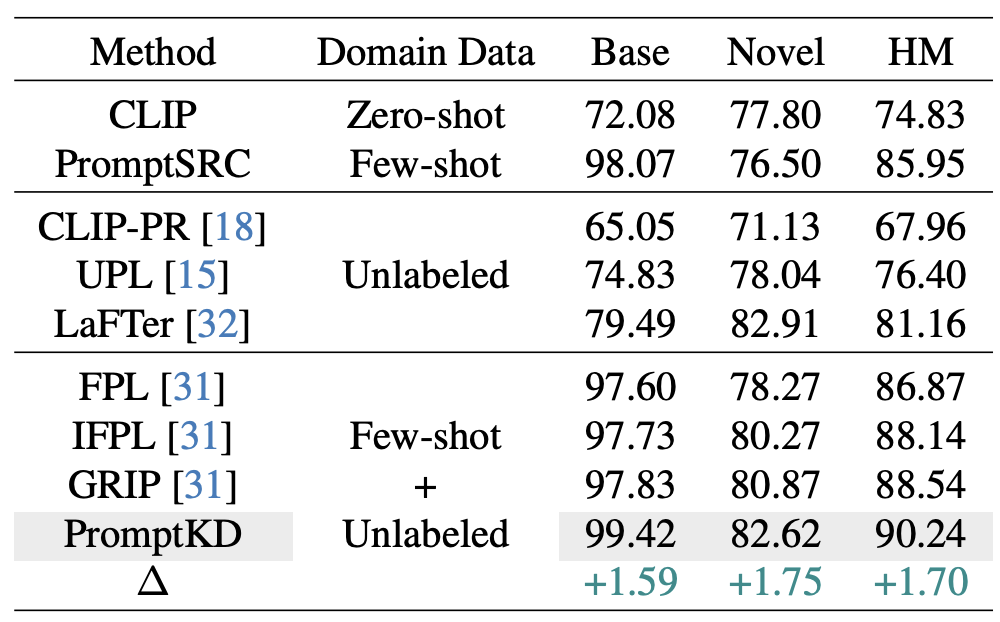

(3). Distillation is performed on large amounts of unlabeled domain images, using soft labels provided by the teacher.
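Distilling with teacher soft labels on unlabeled images typically means minimizing a divergence between the teacher's and the student's class distributions, so no ground-truth label is needed. The sketch below assumes the standard Hinton-style temperature-scaled KL divergence, KL(p_teacher || q_student) multiplied by T^2, computed with a numerically stable log-softmax. The exact loss form, temperature, and weighting used by PromptKD are not specified here, and the function names `log_softmax`, `kd_loss`, and `batch_kd_loss` are hypothetical.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled log-softmax using the log-sum-exp trick for stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    lse = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - lse for z in scaled]


def kd_loss(teacher_logits: Sequence[float], student_logits: Sequence[float],
            temperature: float = 1.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2 (Hinton-style KD)."""
    log_p = log_softmax(teacher_logits, temperature)  # teacher soft labels (log space)
    log_q = log_softmax(student_logits, temperature)  # student predictions (log space)
    kl = sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))
    # T^2 keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


def batch_kd_loss(teacher_batch: Sequence[Sequence[float]],
                  student_batch: Sequence[Sequence[float]],
                  temperature: float = 1.0) -> float:
    """Mean KD loss over a batch of unlabeled images; no ground-truth labels are used."""
    losses = [kd_loss(t, s, temperature) for t, s in zip(teacher_batch, student_batch)]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    teacher = [[2.0, 0.0], [1.0, 1.0]]
    student = [[0.0, 2.0], [1.0, 1.0]]
    print(batch_kd_loss(teacher, student))
```

Because the loss needs only the two sets of logits, any unlabeled domain image can contribute a training signal; the teacher supplies the target distribution and the student is pushed toward it.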

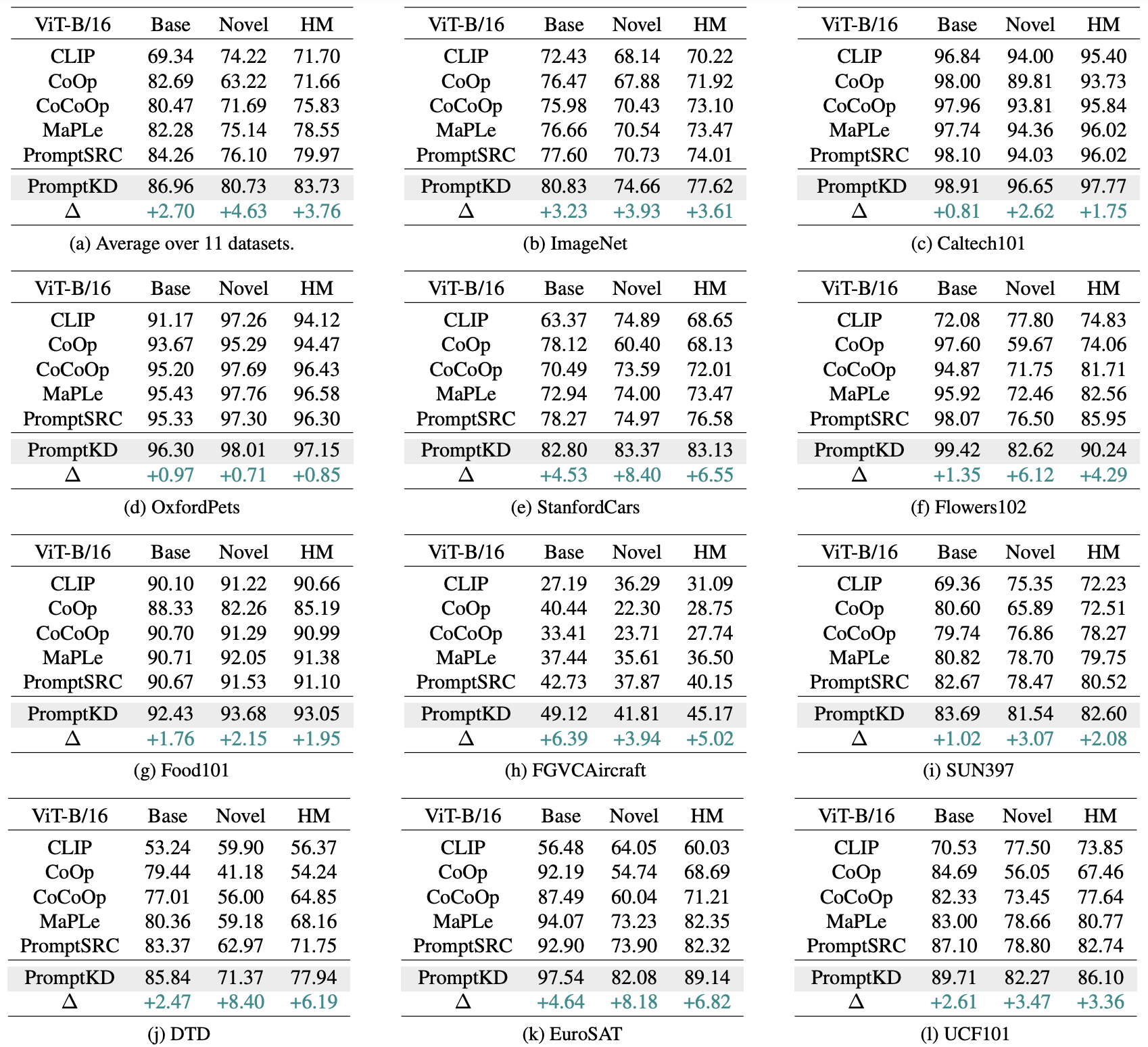

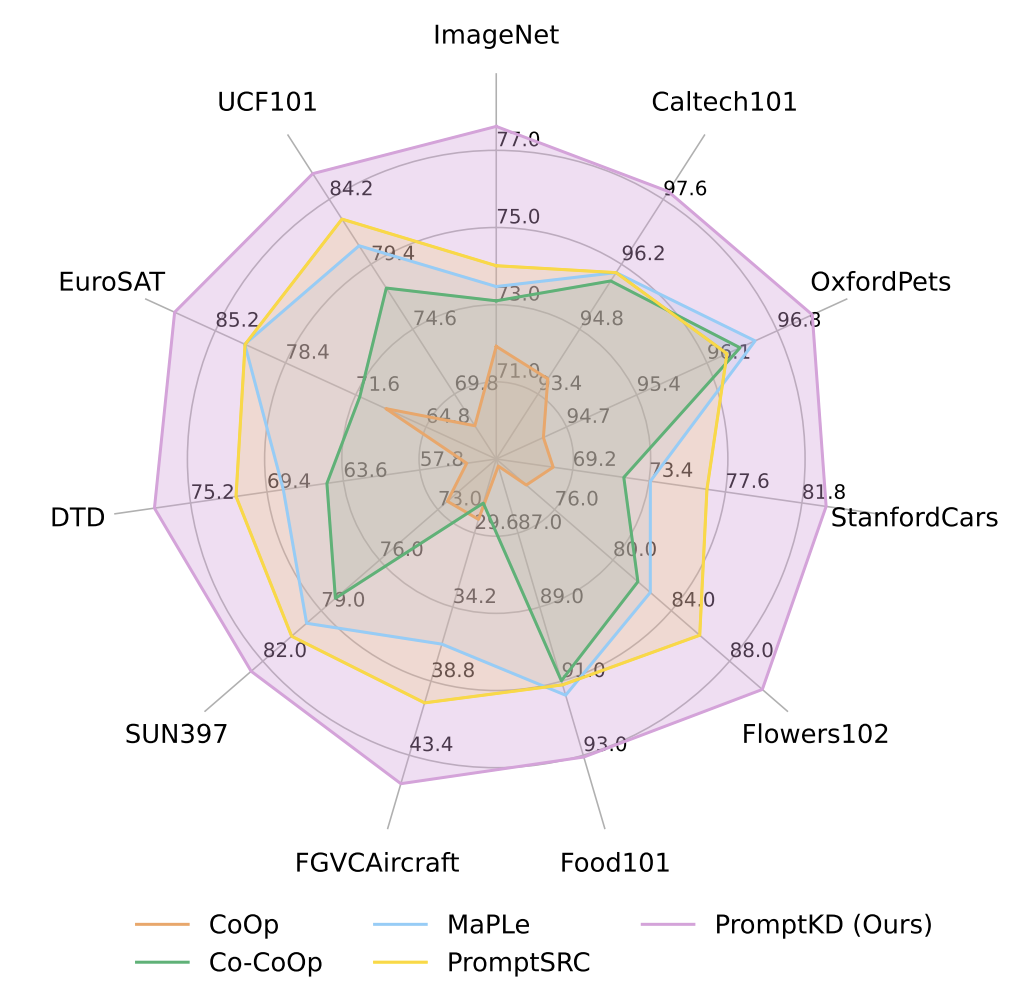

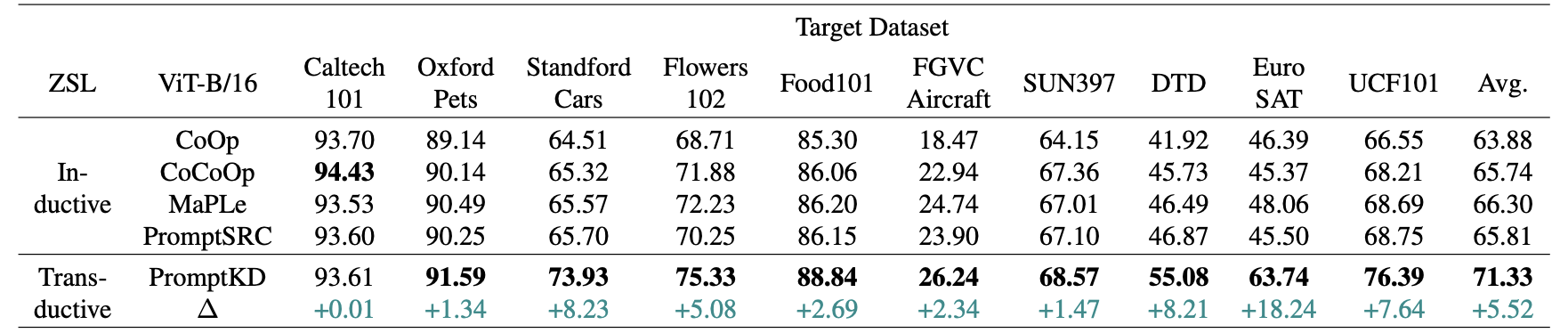

(4). PromptKD outperforms all existing prompt learning methods on 11 diverse recognition datasets.